Smart Warehousing

By , 25 August 2021 14:14

Advantages of cloud-native warehouse management software

After we at Foysonis led the way with a SaaS model in the WMS space in 2015, even legacy dinosaur vendors were forced to sell their licenses on a subscription basis. From a technology standpoint, however, what they did was put old wine in a new bottle: they moved their old on-premises technology onto virtual machines in AWS or Azure (for example, Amazon EC2 instances) and called themselves cloud-based. When we started building our WMS platform from scratch in 2015, we built it native to the cloud: a tech platform built for the future and designed to scale.

Introduction to Cloud Native

Cloud-native has become one of the hottest topics in the modern software development landscape, and it has changed the way we develop and deploy software products. Broadly, cloud-native describes an approach to designing, developing, and running software products that takes full advantage of the cloud-computing service model.

The Cloud Native Computing Foundation (CNCF) provides the following official definition of cloud-native:

Cloud-native technologies empower organizations to build and run scalable applications in modern, dynamic environments such as public, private, and hybrid clouds. Containers, service meshes, microservices, immutable infrastructure, and declarative APIs exemplify this approach.

These techniques enable loosely coupled systems that are resilient, manageable, and observable. Combined with robust automation, they allow engineers to make high-impact changes frequently and predictably with minimal toil.

Why do we need cloud-native architecture?

Time-to-market has become the key differentiator between innovative companies and their lagging competitors. Cloud-native applications provide the speed and agility companies need to stay competitive in their industries, shrinking the time it takes to get a new feature into production from months to days or even hours.

Scalability is another key advantage of the cloud-native approach. As a business grows, it must support more users, more locations, and a wider variety of devices. The pay-as-you-go plans offered by public cloud vendors are an obvious way to increase computing capacity as applications scale, and they also shift expenses from CapEx to OpEx.

Cloud-native architecture also makes it possible to build more reliable applications. Cloud-native concepts such as microservices and containerization help build fault-tolerant applications with built-in self-healing capabilities.

The Foysonis team observed and analyzed the benefits of cloud-native architecture and decided to take advantage of them by designing and developing Foysonis WMS as a cloud-native SaaS application from its initial version. Foysonis selected AWS as its preferred cloud provider and uses a number of AWS services to implement this architecture, including EC2, ECS, RDS, Aurora, ElastiCache, Elasticsearch Service, S3, SES, CodeBuild, and CodePipeline.

Foundational Pillars of Cloud-Native

With an understanding of cloud-native as a concept, let's now explore the foundational pillars of a cloud-native application.

Microservices

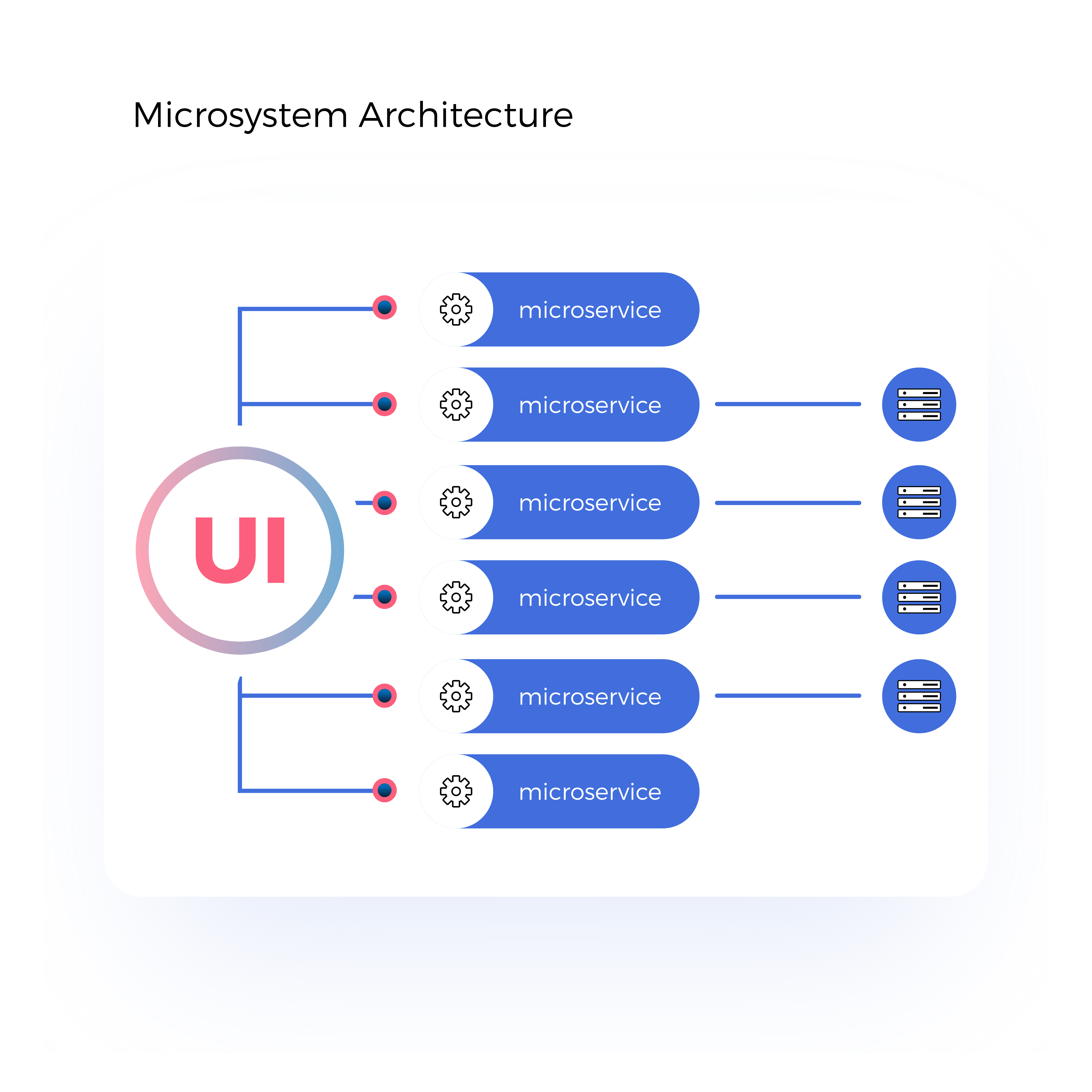

Cloud-native applications are built from loosely coupled microservices, each implementing a specific business capability, that work together to form the whole application. Each microservice runs in its own process and communicates with the others over lightweight protocols such as HTTP/HTTPS.
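To make "communicates over HTTP" concrete, here is a minimal sketch using only the Python standard library. It assumes a hypothetical inventory microservice exposing `GET /inventory/<sku>` and a hypothetical orders service that calls it; the endpoint path, the SKUs, and the `fetch_on_hand` helper are illustrative and are not part of Foysonis WMS.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical stock levels owned by the inventory service.
STOCK = {"SKU-1001": 42, "SKU-2002": 0}


class InventoryHandler(BaseHTTPRequestHandler):
    """Hypothetical inventory microservice: GET /inventory/<sku> returns JSON."""

    def do_GET(self):
        prefix = "/inventory/"
        sku = self.path[len(prefix):] if self.path.startswith(prefix) else None
        if sku not in STOCK:
            self.send_error(404, "unknown SKU")
            return
        body = json.dumps({"sku": sku, "on_hand": STOCK[sku]}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging in this example


def fetch_on_hand(base_url: str, sku: str) -> int:
    """Called by a separate (hypothetical) orders service over plain HTTP."""
    with urllib.request.urlopen(f"{base_url}/inventory/{sku}", timeout=5) as resp:
        return json.loads(resp.read())["on_hand"]


if __name__ == "__main__":
    # Port 0 asks the OS for any free port.
    server = ThreadingHTTPServer(("127.0.0.1", 0), InventoryHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base = f"http://127.0.0.1:{server.server_address[1]}"
    print(fetch_on_hand(base, "SKU-1001"))
    server.shutdown()
    server.server_close()
```

In a real deployment the two services would run in separate processes or containers, and the orders service would locate the inventory service through configuration or service discovery rather than a hard-coded address.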

Scalability is the key advantage of microservices architecture. Because each component of the application is deployed as a separate microservice, each microservice can be scaled independently without scaling the entire application. For example, in a WMS application, the inbound and outbound microservices can be scaled up during peak periods without scaling the reports microservice.
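Independent scaling can be illustrated with the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current × currentMetric / targetMetric), applied separately to each service. The service names, CPU figures, 60% target, and replica bounds below are assumptions; real autoscalers (including ECS target tracking) add tolerances and cooldowns that this sketch omits.

```python
import math
from dataclasses import dataclass


@dataclass
class Service:
    name: str
    replicas: int
    cpu_percent: float  # average CPU utilization across the service's replicas
    min_replicas: int = 1
    max_replicas: int = 10


def desired_replicas(svc: Service, target_cpu_percent: float = 60.0) -> int:
    """Proportional scaling rule, clamped to the service's replica bounds."""
    raw = math.ceil(svc.replicas * svc.cpu_percent / target_cpu_percent)
    return max(svc.min_replicas, min(svc.max_replicas, raw))


# Hypothetical peak-period load: inbound and outbound are busy, reports is idle.
services = [
    Service("inbound", replicas=2, cpu_percent=90.0),
    Service("outbound", replicas=3, cpu_percent=80.0),
    Service("reports", replicas=1, cpu_percent=20.0),
]
for svc in services:
    print(f"{svc.name}: {svc.replicas} -> {desired_replicas(svc)} replicas")
```

With these inputs, inbound grows to 3 replicas and outbound to 4, while reports stays at 1, so only the busy services consume extra capacity.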

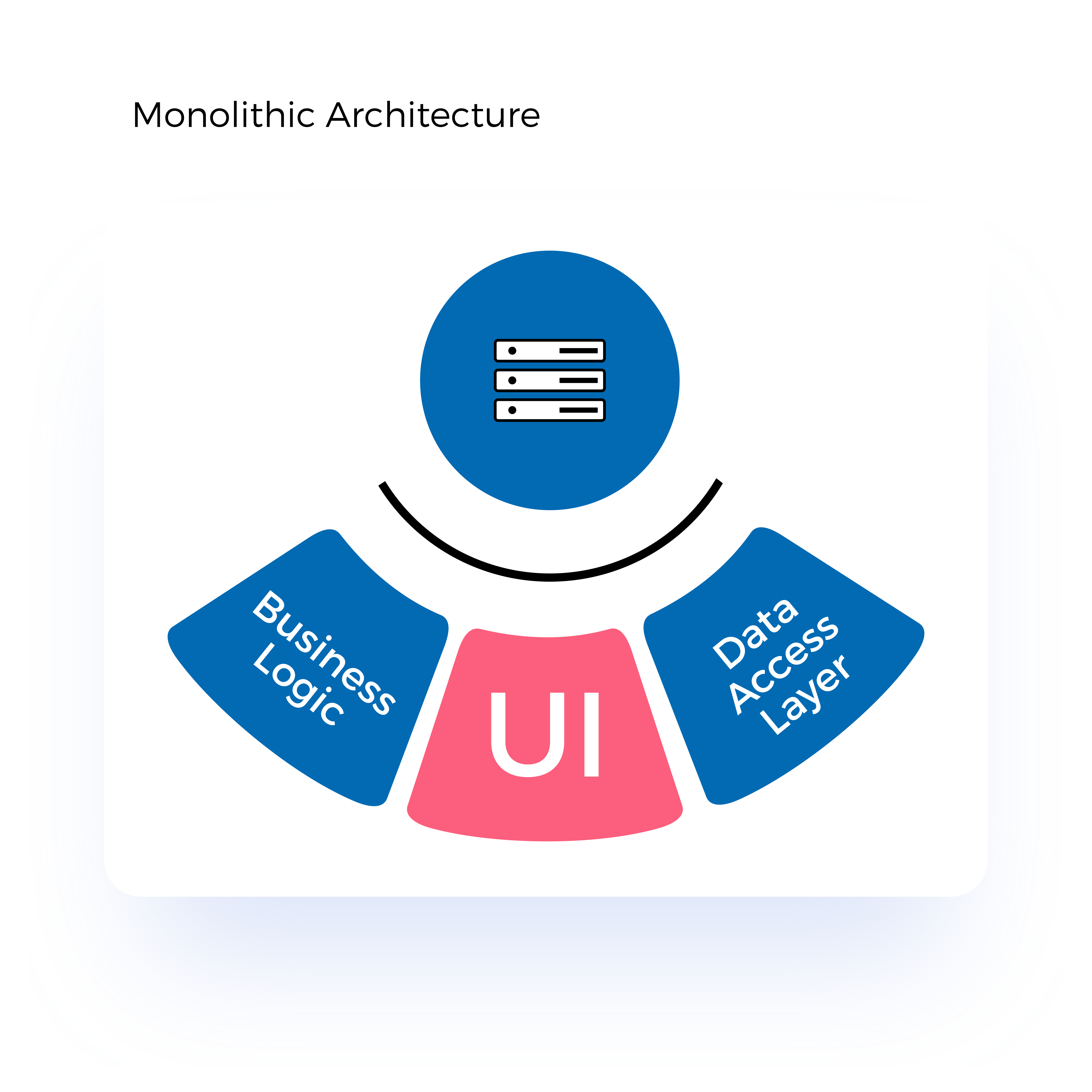

Figure 1: Monolithic Architecture vs Microservices Architecture

Figure 1 illustrates the differences between monolithic and microservices architectures. In a monolithic architecture, all the components, including the user interface, business logic, and data access layer, are bundled into a single application. In a microservices architecture, each component is separated into an independent microservice, and these microservices communicate with each other.

Foysonis WMS is designed as a set of microservices that work together to deliver complete functionality to the end user, including a microservice for each module, such as receiving, inventory, orders, users, and reports.

Containers

Containers can be used in conjunction with microservices to create portable and scalable cloud-native applications. Each microservice can be packaged into a separate container and deployed independently. Because each container encapsulates all the dependencies the microservice needs to run, containers improve portability and help ensure consistency across environments. A container can be deployed to any environment where a container runtime is available.

Docker is the best-known container runtime, but other options exist, such as containerd, rkt, and the Mesos containerizer.

As the number of containers grows, managing them without a proper container management tool becomes very hard. Container orchestration tools fill this gap by providing services such as scheduling, service discovery, auto-scaling, self-healing, and rolling updates. While a number of such tools are available, Kubernetes has become the de facto standard container orchestrator.
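Self-healing and scaling in orchestrators such as Kubernetes and ECS rest on a control loop that repeatedly compares the declared (desired) state with the observed state and corrects the difference. The toy `reconcile` function below sketches one pass of such a loop; the `Container` model and its `healthy` flag are simplifications and not any orchestrator's real data model.

```python
import itertools
from dataclasses import dataclass, field
from typing import Dict, List

_next_id = itertools.count(1)


@dataclass
class Container:
    service: str
    healthy: bool = True
    id: int = field(default_factory=lambda: next(_next_id))


def reconcile(desired: Dict[str, int], running: List[Container]) -> List[Container]:
    """One pass of a toy control loop: make `running` match `desired` replica counts."""
    # Self-healing: discard containers whose health check failed.
    result = [c for c in running if c.healthy]
    # Drop containers of services that are no longer declared.
    result = [c for c in result if c.service in desired]
    for service, count in desired.items():
        current = [c for c in result if c.service == service]
        if len(current) < count:
            # Scale up (or replace failed containers) by starting new ones.
            result.extend(Container(service) for _ in range(count - len(current)))
        else:
            # Scale down by stopping any surplus containers.
            for extra in current[count:]:
                result.remove(extra)
    return result


running = [Container("orders"), Container("orders", healthy=False), Container("reports")]
desired = {"orders": 3, "receiving": 1}
for c in reconcile(desired, running):
    print(c)
```

A real orchestrator runs this comparison continuously, so a crashed container is replaced on the next pass without anyone intervening.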

Foysonis WMS was initially deployed on Amazon EC2 instances, AWS's virtual-machine-based compute infrastructure. The deployment was later migrated to a containerized model with Docker to improve the portability of Foysonis WMS across environments. Foysonis WMS Docker containers are deployed on Amazon ECS, a fully managed container orchestration service from AWS that offers features such as auto-scaling, scheduling, container auto-recovery, service discovery, security, load balancing, and monitoring.
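As an illustration of how a containerized service is described to ECS, the sketch below builds a request for the ECS `RegisterTaskDefinition` API and submits it with boto3. The family name, image URI, port, CPU and memory sizes, and the `/health` endpoint are all hypothetical, and the health-check command assumes `curl` is installed in the image. Calling `register` requires boto3 plus AWS credentials with ECS permissions.

```python
import json


def inventory_task_definition(image: str, port: int = 8080) -> dict:
    """Build a RegisterTaskDefinition request for a hypothetical 'inventory' service."""
    return {
        "family": "wms-inventory",  # hypothetical family name
        "containerDefinitions": [
            {
                "name": "inventory",
                "image": image,
                "cpu": 256,      # CPU units (1024 = one vCPU)
                "memory": 512,   # hard memory limit in MiB
                "essential": True,
                "portMappings": [{"containerPort": port, "protocol": "tcp"}],
                "environment": [{"name": "PORT", "value": str(port)}],
                # If this check keeps failing, ECS marks the container UNHEALTHY and
                # an ECS service replaces the task (assumes curl exists in the image).
                "healthCheck": {
                    "command": ["CMD-SHELL", f"curl -f http://localhost:{port}/health || exit 1"],
                    "interval": 30,
                    "timeout": 5,
                    "retries": 3,
                },
            }
        ],
    }


def register(task_def: dict) -> str:
    """Submit the task definition to ECS and return its ARN."""
    import boto3  # imported here so the builder above works without boto3 installed

    ecs = boto3.client("ecs")
    response = ecs.register_task_definition(**task_def)
    return response["taskDefinition"]["taskDefinitionArn"]


if __name__ == "__main__":
    # Hypothetical image URI; prints the request instead of calling AWS.
    image_uri = "123456789012.dkr.ecr.us-east-1.amazonaws.com/wms-inventory:1.0"
    print(json.dumps(inventory_task_definition(image_uri), indent=2))
```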

Modern Design

Cloud-native applications should be designed to be reliable, scalable, and portable between environments, and to support continuous integration and deployment. The Twelve-Factor App is a widely accepted methodology for designing cloud-native applications, and its principles can be applied to applications written in any programming language.

Below are the twelve factors developers should focus on when developing cloud-native applications.

- Codebase – A single codebase tracked in a source control system that can be deployed into multiple environments (dev, QA, staging, production)

- Dependencies – Each microservice should explicitly declare and isolate its dependencies

- Config – Configurations should be stored external to the microservices

- Backing services – Backing services such as databases, caches, message queues should be attached resources exposed via addressable URLs

- Build, release, run – The build, release, and run stages should be strictly separated.

- Processes – Each microservice should run as one or more stateless processes, with any persistent state stored in an external backing service.

- Port binding – Each microservice should expose its functionality by binding to a port

- Concurrency – Microservices should scale out horizontally (by adding processes) rather than vertically.

- Disposability – Services should be disposable with fast startup and graceful shutdown

- Dev/prod parity – The environments in the application lifecycle (dev, QA, staging, production) should be as similar as possible

- Logs – Logs should be treated as event streams

- Admin processes – Admin tasks should run as one-off processes
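The Config factor is the easiest one to show in code. The minimal sketch below assumes hypothetical variable names `PORT`, `DATABASE_URL`, and `LOG_LEVEL`: settings are read from the environment at startup, so the same build artifact can run unchanged in dev, QA, staging, and production with different values.

```python
import os
from collections.abc import Mapping
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    port: int
    database_url: str
    log_level: str


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Build the service's settings from environment variables (hypothetical names)."""
    database_url = env.get("DATABASE_URL")
    if not database_url:
        # Fail fast at startup instead of running without a backing service.
        raise RuntimeError("DATABASE_URL must be set")
    return Settings(
        port=int(env.get("PORT", "8080")),
        database_url=database_url,
        log_level=env.get("LOG_LEVEL", "INFO").upper(),
    )
```

For example, `load_settings({"DATABASE_URL": "postgresql://wms-db.internal:5432/wms", "PORT": "9000"})` yields port 9000 and the default log level `INFO`. Passing the mapping in explicitly also keeps the function easy to test without touching the real environment.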

Foysonis WMS has been designed in adherence to the twelve-factor app model. Microservices provide a clear separation between the modules of the application, and each microservice exposes an API that consumer services can invoke. Foysonis WMS uses several backing services, such as RDS and Redis, which are exposed via addressable URLs. Additionally, we maintain dev/prod parity by keeping the QA and pre-production environments identical to production.
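Treating a backing service as an attached resource means the service only knows a URL, so swapping a database or cache becomes a configuration change rather than a code change. The sketch below splits such URLs with `urllib.parse.urlsplit`; the host names and the `Endpoint` type are hypothetical, and a real service would normally hand the URL to its database or Redis client library instead.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Endpoint:
    scheme: str
    host: str
    port: Optional[int]
    path: str  # e.g. database name or Redis DB index


def parse_backing_service(url: str) -> Endpoint:
    """Split an attached-resource URL into the parts a client library needs."""
    parts = urlsplit(url)
    if not parts.scheme or not parts.hostname:
        raise ValueError(f"not an addressable URL: {url!r}")
    return Endpoint(parts.scheme, parts.hostname, parts.port, parts.path.lstrip("/"))


# Hypothetical attached resources, e.g. injected through environment variables.
resources = {
    "database": "postgresql://wms_app@wms-db.example.internal:5432/wms",
    "cache": "redis://wms-cache.example.internal:6379/0",
}
for role, url in resources.items():
    print(role, parse_backing_service(url))
```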

Automation

Automation is another core pillar of cloud-native applications. This enables us to both create the application deployment infrastructure and deploy applications in a more automated, consistent, and reliable manner.

Infrastructure as code (IaC) allows the infrastructure (virtual machines, databases, load balancers, etc.) to be managed through configuration files. All infrastructure configuration can then be stored in a version control system, just like source code, so software engineering practices such as testing and version control can be applied to the infrastructure as well. Terraform is one of the best-known tools for implementing infrastructure as code.
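The core idea behind declarative infrastructure-as-code tools is to compare the declared resources with what already exists and derive the actions needed to close the gap, which Terraform presents as a plan. The following is a toy model of that diff step, not Terraform's actual algorithm; the resource names and attributes are made up, and real tools also track dependencies and distinguish in-place updates from replacements.

```python
from typing import Any, Dict, List, Tuple

Resource = Dict[str, Any]


def plan(desired: Dict[str, Resource], actual: Dict[str, Resource]) -> List[Tuple[str, str]]:
    """Return (action, resource_name) pairs that would make `actual` match `desired`."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    # Anything that exists but is no longer declared gets removed.
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(("delete", name))
    return actions


# Hypothetical declared infrastructure (normally kept in version control).
desired = {
    "wms-db": {"engine": "postgres", "instance_class": "db.t3.medium"},
    "wms-cache": {"engine": "redis", "nodes": 2},
}
# Hypothetical current state of the cloud account.
actual = {
    "wms-db": {"engine": "postgres", "instance_class": "db.t3.small"},
    "legacy-queue": {"engine": "sqs"},
}

for action, name in plan(desired, actual):
    print(action, name)
```

Because the plan is computed from version-controlled declarations, it can be reviewed like any other code change before it is applied.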

Once infrastructure provisioning is automated with infrastructure-as-code tools, the next step is to automate application deployments with continuous integration and continuous delivery (CI/CD) tools.

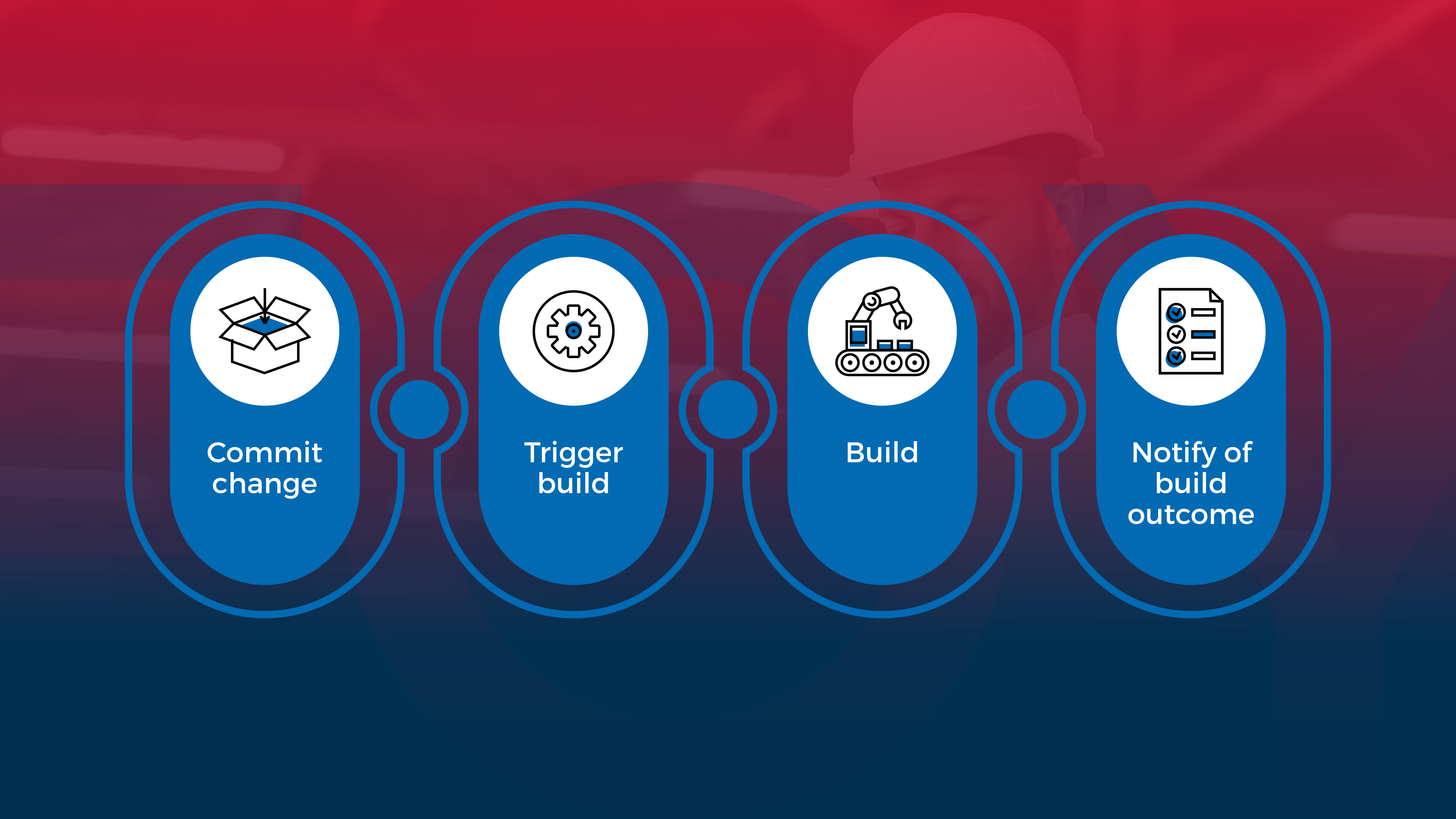

Figure 2: CI/CD Pipeline

The CI (continuous integration) part of the CI/CD pipeline focuses on transforming the source code into a binary artifact. This process is normally triggered when new code is committed to the source code repository. Tasks such as unit tests and static code analysis are executed in the CI stage of the pipeline.
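A CI stage is essentially an ordered list of checks that must all pass before an artifact is produced. The sketch below runs such steps with `subprocess` and stops at the first failure. The commands in `CI_STEPS` are assumptions (they require pytest, flake8, and the `build` package to be installed) and do not reflect the actual Foysonis pipeline, which runs on AWS CodeBuild.

```python
import subprocess
import sys
from typing import List, Optional, Tuple

Step = Tuple[str, List[str]]

# Hypothetical CI steps; assumes pytest, flake8 and the `build` package are installed.
CI_STEPS: List[Step] = [
    ("unit tests", [sys.executable, "-m", "pytest", "-q"]),
    ("static analysis", [sys.executable, "-m", "flake8", "src"]),
    ("build artifact", [sys.executable, "-m", "build", "--wheel"]),
]


def run_ci(steps: List[Step]) -> Optional[str]:
    """Run steps in order; return the name of the first failing step, or None."""
    for name, cmd in steps:
        print(f"[ci] {name}: {' '.join(cmd)}", flush=True)
        result = subprocess.run(cmd)
        if result.returncode != 0:
            print(f"[ci] {name} failed (exit code {result.returncode})", flush=True)
            return name
    print("[ci] all steps passed", flush=True)
    return None
```

A build job would call `run_ci(CI_STEPS)` and fail the job whenever the result is not `None`, so no artifact is produced from code that fails its tests or static checks.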

The CD (continuous delivery) part of the CI/CD pipeline is focused on deploying the binary artifact produced by the CI into different environments such as QA, pre-production, and production. Tasks such as integration testing, load testing, and UAT will be executed at this stage of the pipeline.
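The CD side can be sketched as promoting one immutable artifact through QA, pre-production, and production, with a gate after each deployment. In this sketch the `deploy` callback and the gate functions are hypothetical stand-ins for real deployment steps and for integration tests, load tests, or UAT sign-off; rollback of a failed deployment is left out.

```python
from typing import Callable, Dict, List

# Promotion order described in the text.
ENVIRONMENTS = ["qa", "pre-production", "production"]


def promote(artifact: str,
            deploy: Callable[[str, str], None],
            gates: Dict[str, Callable[[str], bool]]) -> List[str]:
    """Deploy one artifact environment by environment, stopping at a failed gate.

    Returns the environments the artifact was deployed to.
    """
    reached = []
    for env in ENVIRONMENTS:
        deploy(env, artifact)  # the same artifact everywhere: build once, deploy many
        reached.append(env)
        gate = gates.get(env)
        if gate is not None and not gate(artifact):
            print(f"gate failed in {env}; {artifact} is not promoted further")
            break
    return reached


def fake_deploy(env: str, artifact: str) -> None:
    print(f"deploying {artifact} to {env}")


# Hypothetical artifact tag; both gates pass, so it reaches production.
print(promote("wms-inventory:1.4.2", fake_deploy,
              {"qa": lambda a: True, "pre-production": lambda a: True}))
```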

Foysonis uses automation wherever possible to increase the efficiency of the software delivery lifecycle. AWS CodeBuild and AWS CodePipeline are used to create the CI/CD pipeline for Foysonis WMS, which can deploy new versions of the application to end users without noticeable downtime. All of the cloud infrastructure for Foysonis deployments is created with infrastructure-as-code tools such as Terraform. Additionally, we have implemented a modern monitoring and telemetry system that detects anomalies within the system, allowing the Foysonis operations team to identify and correct them before they impact customers.
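Production anomaly detection can be far more sophisticated, but a rolling z-score captures the basic idea: flag a metric sample that lies many standard deviations away from its recent baseline. This is a generic technique, not a description of the Foysonis monitoring system; the window size, threshold, minimum sample count, and latency values are assumptions for illustration.

```python
import statistics
from collections import deque


class AnomalyDetector:
    """Flag samples that deviate strongly from a rolling baseline (z-score rule)."""

    def __init__(self, window: int = 30, threshold: float = 3.0, min_samples: int = 5):
        self.samples = deque(maxlen=window)  # oldest samples fall out automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Record a sample; return True if it looks anomalous versus the window."""
        anomalous = False
        if len(self.samples) >= self.min_samples:
            baseline = list(self.samples)
            mean = statistics.mean(baseline)
            std = statistics.stdev(baseline)
            if std == 0:
                anomalous = value != mean
            else:
                anomalous = abs(value - mean) / std > self.threshold
        # Every sample joins the window, so a lasting level shift becomes the new baseline.
        self.samples.append(value)
        return anomalous


# Hypothetical API latencies in milliseconds, ending with a spike.
detector = AnomalyDetector()
for latency in [100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 103.0, 97.0, 100.0, 101.0, 250.0]:
    if detector.observe(latency):
        print(f"anomaly: {latency} ms")
```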